About

I’m a UX researcher with expertise in attention, memory, learning, and behavioral measurement. My work sits at the intersection of cognitive science and product strategy, focused on helping teams understand how people think, learn, and behave.

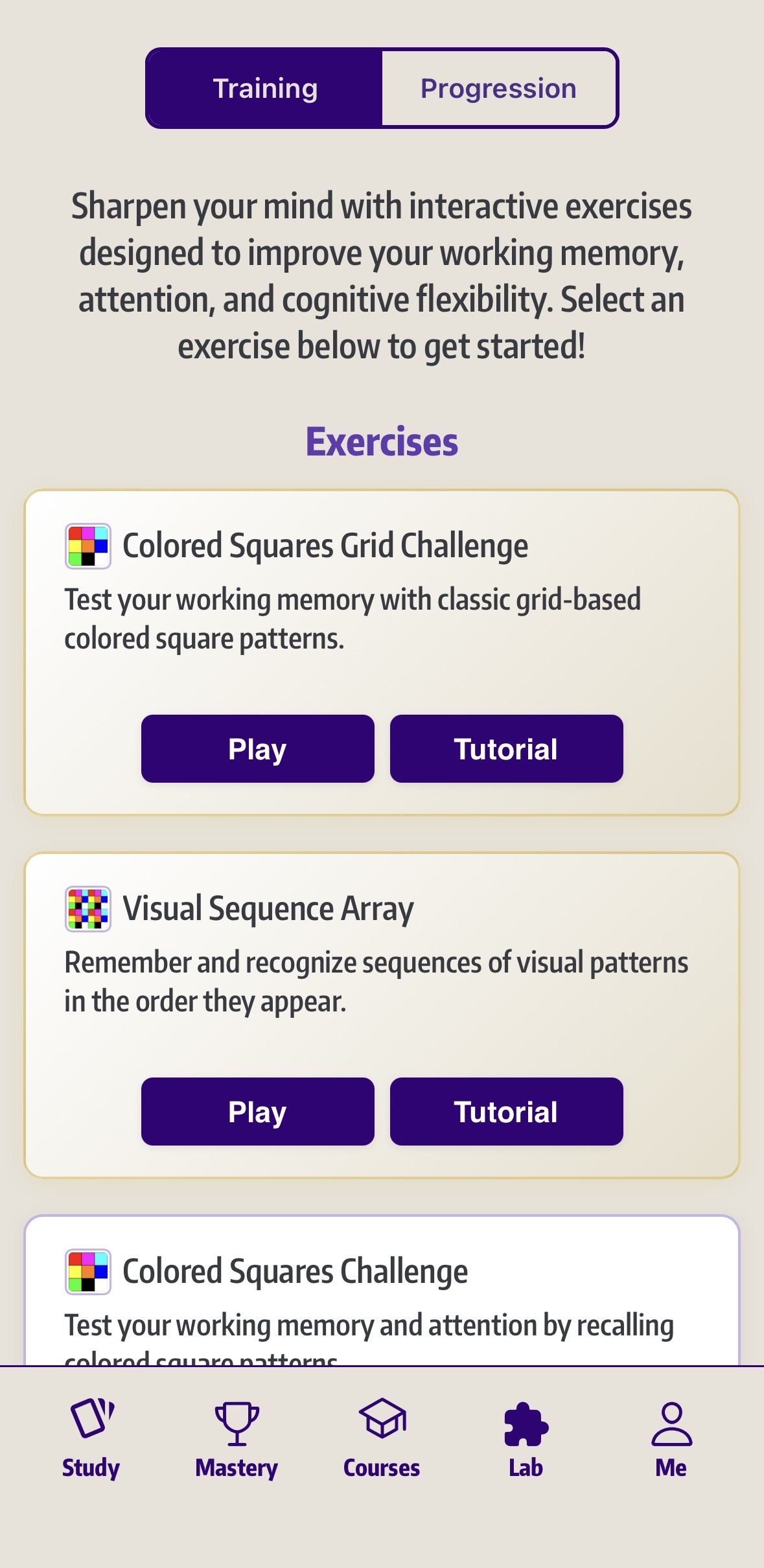

I completed my PhD in Cognitive Neuroscience at the University of Notre Dame, where I studied visual working memory and attention. There, I developed experimental paradigms to better understand how information is encoded and maintained, and how cognitive load affects it.

I later worked as a UX Researcher at Microsoft Mixed Reality, investigating perception, cognition, and usability in augmented reality systems to inform interface design and improve human performance in immersive environments.

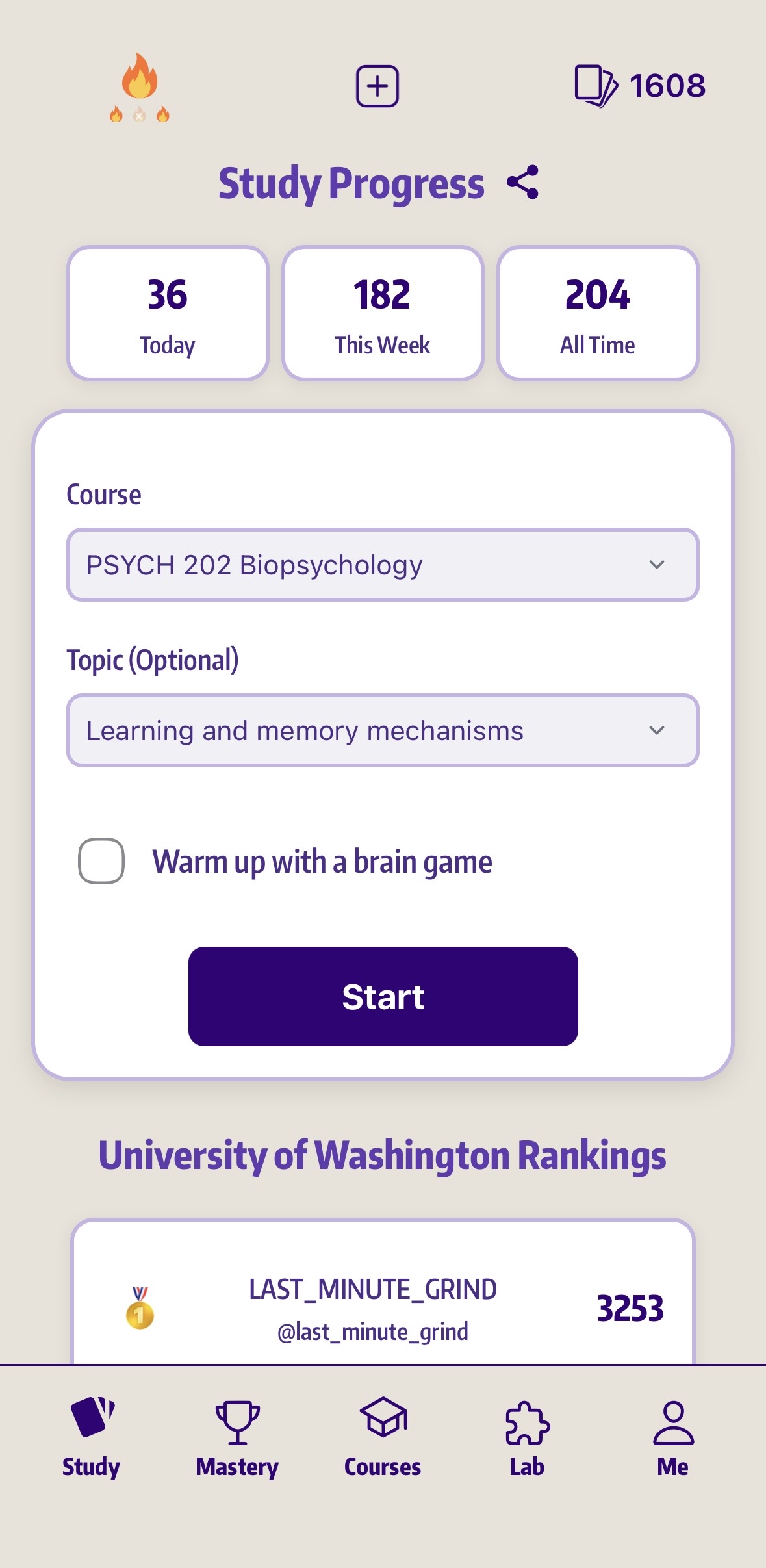

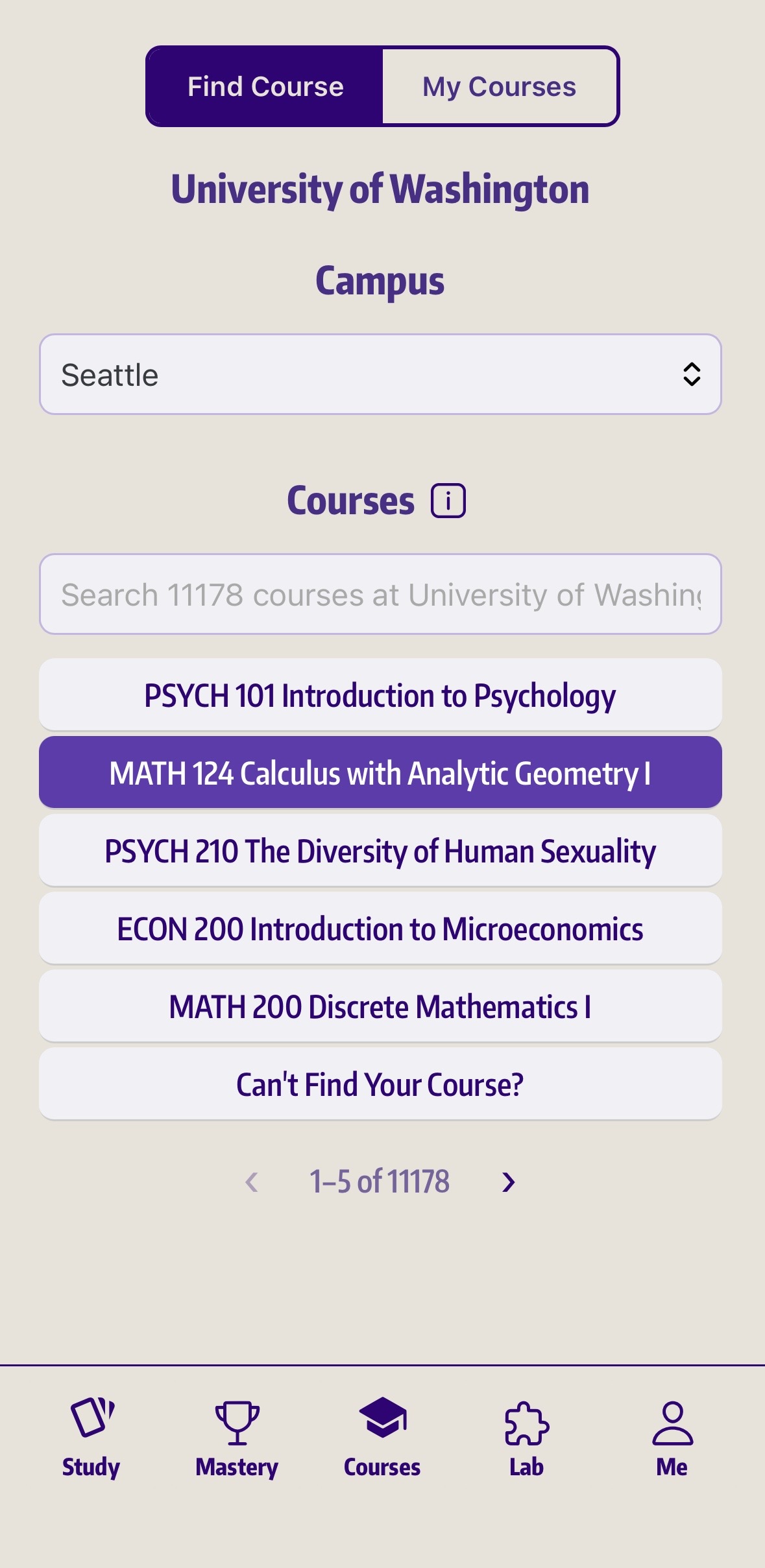

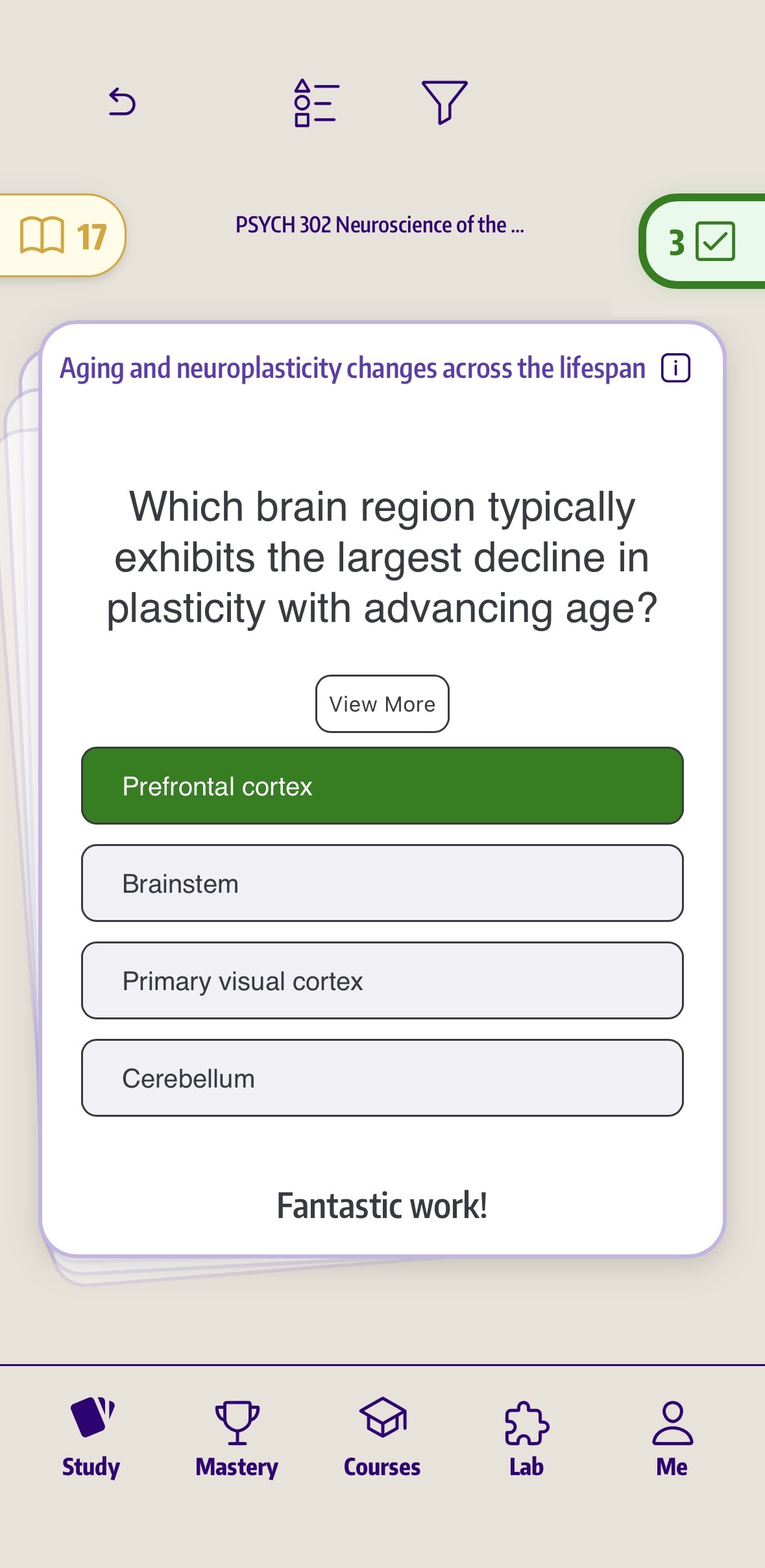

Today, I lead research and product work at Laureata, applying mixed-methods and experimental approaches to learning technology, feature design, pricing, and product direction.

Across roles, my focus has been consistent: turning rigorous research into practical product decisions with clear user and business impact.